The NTFS file system is case-sensitive by design, but Windows treats file names as case-insensitive by default.

To enable case sensitivity, set the following value in the Registry to 0 (the default, 1, forces case-insensitive lookups):

[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Session Manager\kernel]

"obcaseinsensitive"=dword:00000000

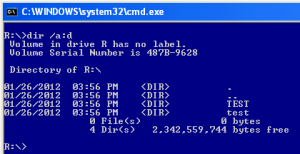

Then restart the system. After the restart, it becomes possible to create files and directories whose names differ only in case.
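Before and after the change, it can help to confirm what the value currently holds. The following is a minimal Python sketch that reads it with the standard-library `winreg` module. The helper names `describe` and `read_setting` are hypothetical, introduced only for this illustration, and the interpretation assumes that 0 means "case sensitivity allowed" and any other value means "case-insensitivity forced".

```python
import sys

# Key path and value name taken from the Registry fragment above.
KEY_PATH = r"SYSTEM\CurrentControlSet\Control\Session Manager\kernel"
VALUE_NAME = "obcaseinsensitive"


def describe(value):
    """Interpret the DWORD (assumption: 0 allows case-sensitive lookups)."""
    if value == 0:
        return "case-sensitive lookups allowed"
    return "case-insensitive lookups forced"


def read_setting():
    """Return the current DWORD, or None off Windows or if the value is missing."""
    if sys.platform != "win32":
        return None
    import winreg  # Windows-only standard-library module
    try:
        with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, KEY_PATH) as key:
            value, _value_type = winreg.QueryValueEx(key, VALUE_NAME)
            return value
    except FileNotFoundError:
        return None


if __name__ == "__main__":
    current = read_setting()
    if current is None:
        print("setting not available on this system")
    else:
        print(f"{VALUE_NAME} = {current}: {describe(current)}")
```

The sketch only reads the value. Writing it requires administrator rights and is probably better done deliberately in the Registry editor.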

Note that Win32 APIs such as CreateDirectoryA/W call the NtCreateFile API with the OBJ_CASE_INSENSITIVE flag set, so they can’t be used to create case-sensitive files or directories.
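One way to observe this limitation is to try creating two directories whose names differ only in case through an ordinary high-level API. The Python sketch below does that in a temporary directory. The function name `names_differing_by_case_coexist` is hypothetical, and the sketch assumes that Python's `os.mkdir` on Windows goes through `CreateDirectoryW`, so on a default NTFS volume it would be expected to return `False` regardless of the Registry setting.

```python
import os
import tempfile


def names_differing_by_case_coexist(parent=None):
    """Try to create 'Probe' and 'probe' side by side in a fresh temp directory.

    Returns True only if both directories exist as separate entries.
    """
    with tempfile.TemporaryDirectory(dir=parent) as tmp:
        for name in ("Probe", "probe"):
            try:
                os.mkdir(os.path.join(tmp, name))
            except FileExistsError:
                # The second name resolved to the first: case-insensitive lookup.
                return False
        return len(os.listdir(tmp)) == 2


if __name__ == "__main__":
    print(names_differing_by_case_coexist())
```

The probe reports what the calling API layer sees, not what NTFS itself is capable of, which is exactly the distinction described above.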