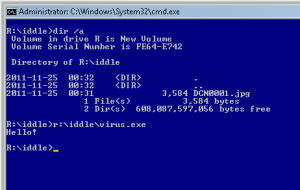

This is the answer to Riddle #2.

This one was easy as long as you are familiar with short (8.3) file names: running the command “dir /x” reveals the short name “virus.exe”, which belongs to an executable file hidden behind a long name that suggests a JPG picture.
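For anyone who wants to check a whole folder rather than eyeball the listing, here is a minimal Python sketch of a complementary check on the long name itself. It does not read the short name. It assumes the disguise works by putting a decoy picture extension somewhere before the real executable extension, which this post does not spell out. The function name and both extension lists are made up for illustration.

```python
import os

# Hypothetical, non-exhaustive extension lists chosen for this sketch.
EXECUTABLE_EXTENSIONS = {".exe", ".scr", ".com", ".bat", ".cmd", ".pif"}
PICTURE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".gif", ".bmp"}


def looks_like_disguised_executable(long_name: str) -> bool:
    """Heuristic: the real (last) extension is executable, but a picture
    extension appears earlier in the name as a decoy."""
    lowered = long_name.lower().rstrip()
    # splitext splits at the last dot, so real_ext is the effective extension.
    stem, real_ext = os.path.splitext(lowered)
    if real_ext not in EXECUTABLE_EXTENSIONS:
        return False
    # A plain substring match is crude and can produce false positives.
    return any(ext in stem for ext in PICTURE_EXTENSIONS)


if __name__ == "__main__":
    for name in ["holiday.jpg          .exe", "holiday.jpg", "setup.exe"]:
        print(repr(name), looks_like_disguised_executable(name))
```

This is only a heuristic on the long name; “dir /x” remains the direct way to see the short name that Windows actually stores for the file.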

Thanks for trying, and the next riddle is coming on Friday!